of the variable x and of the hash character (#) used as a separator. A first series of results is the construction of a CS-separator.

So, is there an intuitive way to visualize a complex hyperplane? For concreteness, you can assume that the space is a finite-dimensional Hilbert space. Note that I am an undergraduate, so I'd really appreciate answers that are not too advanced (the Hopf fibration, for example, would be too advanced for me).
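For the finite-dimensional case, one way to build intuition is to check small examples numerically. The sketch below assumes NumPy and uses a hypothetical functional $f(z) = a_1 z_1 + a_2 z_2$ on $\mathbb{C}^2$ with an arbitrarily chosen $a$; the helper names `realify` and `real_rank` are made up for illustration. It treats $\mathbb{C}^2$ as $\mathbb{R}^4$ and compares the kernel of $f$, which is a complex hyperplane, with a random real two-plane.

```python
import numpy as np

def realify(v):
    """View a vector in C^n as a vector in R^(2n): (Re v, Im v)."""
    return np.concatenate([v.real, v.imag])

def real_rank(vectors):
    """Real dimension of the real span of the given complex vectors."""
    return np.linalg.matrix_rank(np.stack([realify(v) for v in vectors]))

# Hypothetical example: the complex hyperplane H = ker(f) in C^2,
# where f(z) = a1*z1 + a2*z2 for an arbitrarily chosen nonzero a.
a = np.array([1 + 2j, 3 - 1j])
h = np.array([a[1], -a[0]])  # f(h) = a1*a2 - a2*a1 = 0, so h spans H over C

# Over R, H is spanned by h and i*h.
H_basis = [h, 1j * h]
dim_H = real_rank(H_basis)

# Multiplying H by i should not leave H, so the rank should not grow.
dim_H_plus_iH = real_rank(H_basis + [1j * v for v in H_basis])

# A randomly chosen real 2-plane P in C^2 = R^4, for comparison.
rng = np.random.default_rng(0)
P_basis = [rng.normal(size=2) + 1j * rng.normal(size=2) for _ in range(2)]
dim_P = real_rank(P_basis)
dim_P_plus_iP = real_rank(P_basis + [1j * v for v in P_basis])

print("H:", dim_H, "H + iH:", dim_H_plus_iH)
print("P:", dim_P, "P + iP:", dim_P_plus_iP)
```

If the rank of `P + iP` comes out larger than the rank of `P`, then multiplying by $i$ moved the plane off itself, which is what "not closed under complex scalar multiplication" means in coordinates.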

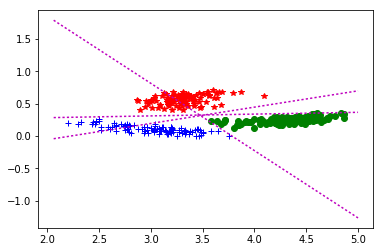

of hyperplane arrangements, linear programming, and the Tutte polynomial.

A hyperplane separating the two classes might be written as $w_0 + w_1 a_1 + w_2 a_2 = 0$ in the two-attribute case, where $a_1$ and $a_2$ are the attribute values and there are three weights $w_i$ to be learned. However, the equation defining the maximum-margin hyperplane can be written in another form, in terms of the support vectors.

Since this has gotten bumped, and may be useful to posterity: Literally, a complex hyperplane in a finite-dimensional complex vector space is (i) a real subspace of real codimension two that (ii) is closed under complex scalar multiplication. How are we to think about this geometrically?

(i) The picture of a real hyperplane $H$ dividing the ambient space $V$ into two half-spaces can be understood by picking a complementary real one-dimensional subspace $N$. (In an inner product space we'd often take $N = H^\perp$.) […] is therefore "usually" not equal to $H$, and is therefore not a complex hyperplane.

The point is, complex hyperplanes really are Very Special among real subspaces of real codimension two. In fancy terms, there is a real two-sphere's worth of complex hyperplanes, the complex projective line, but a product-of-two-spheres' worth of real two-planes, the unoriented Grassmannian.

In equation (1), $w$ is a weight vector and $w_0$ is a bias term defining the separating hyperplane; the perpendicular distance of the hyperplane from the origin is $|w_0| / \lVert w \rVert$, which equals $|w_0|$ only when $w$ is a unit vector. In 2D, the separating hyperplane is nothing but the decision boundary. So I took the following example: $w = [1\ 2]$, $w_0 = $ […]. I was wondering if I can visualize with this example the fact that for all points $x$ on the separating hyperplane, the following equation holds true: $w^T x + w_0 = 0$.

The accompanying scikit-learn snippet imports `matplotlib.pyplot` as `plt`, `svm` from `sklearn`, `make_blobs` from `sklearn.datasets`, and `DecisionBoundaryDisplay` from `sklearn.inspection`. It creates 40 separable points with `X, y = make_blobs(n_samples=40, centers=2, random_state=6)` and then fits the model without regularization, for illustration purposes, starting from `clf = svm.…`
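The claim that every point on the separating hyperplane satisfies $w^T x + w_0 = 0$ can be checked numerically. This is a minimal sketch, assuming scikit-learn and the same `make_blobs` data as above. The estimator settings (`kernel="linear"`, `C=1000`) are an assumption standing in for the truncated `clf = svm.…` call, and the names `boundary`, `residual`, and `dist_origin` are illustrative.

```python
import numpy as np
from sklearn import svm
from sklearn.datasets import make_blobs

# 40 linearly separable points in 2D, as in the snippet above.
X, y = make_blobs(n_samples=40, centers=2, random_state=6)

# Assumption: a linear kernel with a large C, i.e. almost no regularization.
clf = svm.SVC(kernel="linear", C=1000)
clf.fit(X, y)

w = clf.coef_[0]        # weight vector w
w0 = clf.intercept_[0]  # bias term w_0

# Build points on the hyperplane: choose x1 freely, then solve
# w[0]*x1 + w[1]*x2 + w0 = 0 for x2.
x1 = np.linspace(X[:, 0].min(), X[:, 0].max(), 5)
x2 = -(w[0] * x1 + w0) / w[1]
boundary = np.column_stack([x1, x2])

# w^T x + w_0 evaluated by hand and through scikit-learn.
residual = boundary @ w + w0
via_sklearn = clf.decision_function(boundary)

# Perpendicular distance of the hyperplane from the origin.
dist_origin = abs(w0) / np.linalg.norm(w)

print(np.round(residual, 10))
print(np.round(via_sklearn, 6))
print(dist_origin)
```

Both printed arrays should be zero up to floating-point error. Plotting `boundary` over a scatter of `X` would show the same line that `DecisionBoundaryDisplay` draws at level 0.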
2 - Optimal separating hyperplane that maximizes the margin in the feature space.

Equation (1), satisfied by every point $x$ on the separating hyperplane:

$$w^T x + w_0 = 0 \quad\quad\quad \text{(1)}$$

In the histogram and box plots it looks like almost all of the points have a distance of either exactly positive or negative one, with nothing between them. I'm sure I'm calling `decision_function()` incorrectly, but I'm not sure how to do this properly. The relevant calls are `svc = SVC(kernel='linear', probability=True, decision_function_shape='ovr')`, `C_range = …`, `grid = GridSearchCV(svc, param_grid=param_grid, cv=cv, n_jobs=4, iid=False, refit=True)`, and `X = grid.best_estimator_.decision_function(data)`.

I'm currently using SVC to separate two classes of data (the features below are named `data` and the labels are `condition`). After fitting the data using `GridSearchCV` I get a classification score of about […]. After that, I used `grid.best_estimator_.decision_function()` to get the relative distances from the hyperplane for the data from each class, and plotted them in a boxplot and a histogram to get a better idea of how much overlap there is. My problem is that in the histogram and the boxplot the classes look perfectly separable, which I know is not the case.
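For reference, here is a minimal sketch of the workflow described, assuming scikit-learn. The data is synthetic (`make_classification` stands in for the real `data` and `condition`) and the `C` grid is hypothetical, since the original `C_range` is not shown. The `iid` argument is left out because recent scikit-learn releases no longer accept it. For a linear kernel, `decision_function` returns $w^T x + w_0$, so dividing by $\lVert w \rVert$ converts the scores into signed geometric distances.

```python
import numpy as np
from sklearn.datasets import make_classification
from sklearn.model_selection import GridSearchCV, StratifiedKFold
from sklearn.svm import SVC

# Assumption: synthetic stand-ins for the question's `data` and `condition`.
data, condition = make_classification(
    n_samples=200, n_features=5, n_informative=3, n_redundant=0,
    class_sep=0.8, random_state=0,
)

# Hypothetical search grid; the original C_range is not shown.
param_grid = {"C": np.logspace(-2, 2, 5)}
cv = StratifiedKFold(n_splits=5, shuffle=True, random_state=0)

grid = GridSearchCV(SVC(kernel="linear"), param_grid=param_grid, cv=cv, refit=True)
grid.fit(data, condition)

best = grid.best_estimator_
scores = best.decision_function(data)            # w^T x + w_0, one value per sample
distances = scores / np.linalg.norm(best.coef_)  # signed distance to the hyperplane

# Samples with |score| < 1 lie strictly inside the margin.
inside_margin = np.abs(scores) < 1

for label in np.unique(condition):
    d = distances[condition == label]
    print(label, d.min(), d.max())
print("samples inside the margin:", int(inside_margin.sum()))
```

Passing `distances[condition == label]` for each label to a histogram or boxplot shows the overlap directly. If the two distributions still look cleanly separated, it may be worth confirming that the plotted array really is the output of `decision_function` and not of `predict`.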
